What is rendering in games?

When we talk about rendering, we are referring to the process by which a computer transforms information into 2D or 3D scenes or images. You may also have heard the term rasterization, which refers more specifically to the process of converting vector data into pixel-based images. In this article we are going to explain what rendering is and everything you need to know…

What is rendering?

As you may well know, a GPU or CPU can process data to convert certain values into a graphic image. To do this, the processing unit takes a set of instructions and executes them many times on different data to obtain the spatial data, textures, colors, lighting, and other effects that make up the image.
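To make the idea of "the same instructions on different data" concrete, here is a minimal sketch in Python. The `shade` function and the sample values are hypothetical and chosen only for illustration; it applies simple diffuse (Lambertian) lighting, which is just one of many possible shading rules and not how any particular engine works.

```python
def shade(normal, light_dir, base_color):
    """One small 'set of instructions': diffuse lighting for a single pixel."""
    # Dot product of the surface normal and the light direction, clamped at
    # zero so that surfaces facing away from the light end up black.
    intensity = max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
    return tuple(round(c * intensity) for c in base_color)

# Illustrative inputs (assumed unit vectors): one light, three surface normals.
light = (0.0, 0.0, 1.0)
normals = [(0.0, 0.0, 1.0), (0.0, 0.6, 0.8), (0.0, 0.0, -1.0)]
base = (200, 100, 50)

# The same function runs once per pixel, each time on different data.
pixels = [shade(n, light, base) for n in normals]
print(pixels)
```

Here the loop visits the pixels one after another. A GPU would run the same function for many pixels at the same time.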

Rendering types

There are several types of rendering, as you can imagine, and they can be classified according to various factors:

CPU rendering

One way to render is to use the CPU instead of the GPU. In fact, CPUs used to be in charge of this before the first graphics accelerators and graphics cards were released.

As you know, the CPU excels at complex, largely sequential tasks, while the GPU excels at handling many simple tasks simultaneously. Therefore, the most suitable unit for rendering is the GPU, although that does not mean the CPU is incapable of it.

GPUs are noticeably faster than CPUs for certain tasks, such as rendering graphics, because of the large amount of data that has to be processed simultaneously. So when it comes to rendering, the CPU can do the job, but ideally the work is handled by the GPU. In fact, many current rendering programs use the GPU directly and offer no option to use the CPU instead.

GPU rendering

On the other hand, rendering can also be done by the GPU, which is more efficient and delivers higher performance than the CPU. These units not only have more execution cores, they also include units designed specifically to speed up graphics-related tasks and produce better results.

Discrete GPUs usually do not have direct access to the storage media or main RAM, so they typically come with their own dedicated VRAM. Some GPUs, such as iGPUs and those in APUs, do have direct access to main memory. In addition, some recently released technologies help address this limitation, for example NVIDIA GPUDirect and AMD Smart Access Storage.

The choice between CPU and GPU rendering depends entirely on the user's rendering needs. The architecture industry, for example, can benefit more from traditional CPU rendering, which takes more time but generally produces higher-quality images, even though most work is done on the GPU for its advantages…

Hardware vs software rendering

Software rendering refers to the process of generating an image from a model through software running on the CPU, regardless of whether the system has a hardware graphics accelerator. One form of it is real-time software rendering, which is used to render a scene interactively in applications such as games. Software rendering is much slower than hardware rendering, however, and complex scenes can take hours to render this way.
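As a rough illustration of software rendering, the sketch below rasterizes a single triangle on the CPU in plain Python. It tests each pixel center against the triangle's three edges, which is one common approach. The function names and the tiny 4x4 image are assumptions made for this example, and real software renderers are far more elaborate.

```python
def edge(a, b, p):
    """Signed area test: the sign tells on which side of the edge a -> b point p lies."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize_triangle(v0, v1, v2, width, height):
    """Return a height x width grid of 0/1 flags marking the covered pixels."""
    image = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w0, w1, w2 = edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p)
            # The pixel is covered when all three edge tests agree in sign,
            # which accepts triangles given in either winding order.
            inside_ccw = w0 >= 0 and w1 >= 0 and w2 >= 0
            inside_cw = w0 <= 0 and w1 <= 0 and w2 <= 0
            if inside_ccw or inside_cw:
                image[y][x] = 1
    return image

# Vector description of a triangle converted into a 4x4 pixel image.
image = rasterize_triangle((0, 0), (4, 0), (0, 4), 4, 4)
rows = ["".join("#" if covered else "." for covered in row) for row in image]
print("\n".join(rows))
```

Every pixel is visited in turn by a single loop, which hints at why this approach becomes slow at high resolutions and why dedicated hardware that tests many pixels at once is so much faster.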

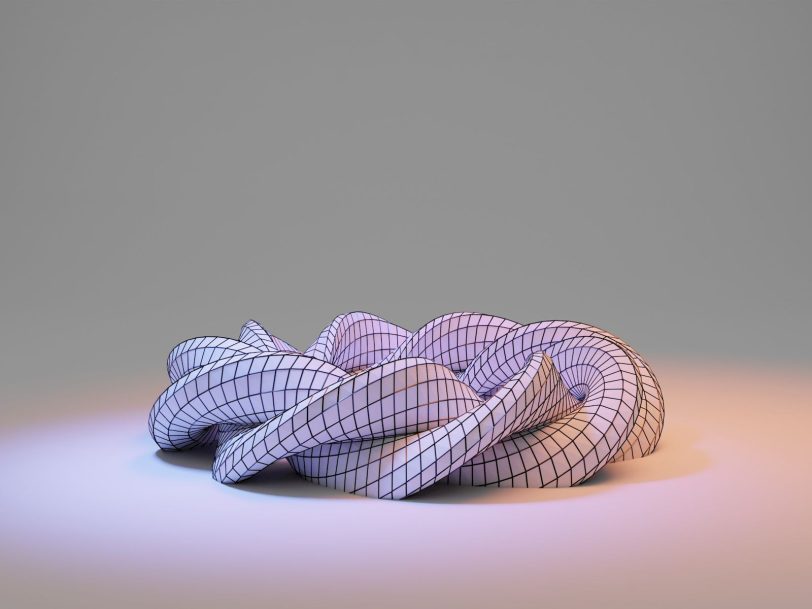

On the other hand, there is hardware rendering, which uses dedicated hardware such as a GPU. This hardware can triangulate from given points or vectors, generate polygons and complex shapes, apply textures and colors, position objects in a scene, and add other effects such as ray-traced lighting. In this case, the performance is superior.
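To give an idea of what triangulating means, the sketch below splits a convex polygon into triangles with a simple "fan" from its first vertex. This is only one basic technique and it is done here in software. The `fan_triangulate` name is hypothetical, and it does not describe how any particular GPU performs this step.

```python
def fan_triangulate(polygon):
    """Split a convex polygon (vertices listed in order) into triangles."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least three vertices")
    anchor = polygon[0]
    # Each triangle joins the first vertex with one pair of consecutive vertices.
    return [(anchor, polygon[i], polygon[i + 1]) for i in range(1, len(polygon) - 1)]

square = [(0, 0), (1, 0), (1, 1), (0, 1)]
triangles = fan_triangulate(square)
print(triangles)
```

A polygon with n vertices yields n - 2 triangles this way, so the square becomes two triangles that can then be textured and drawn.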